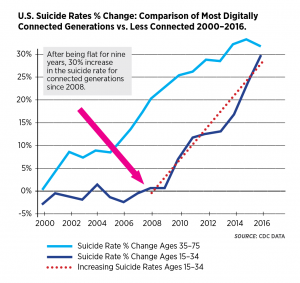

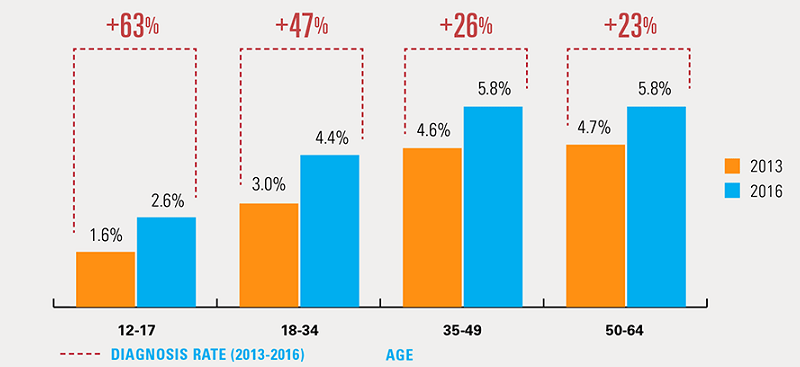

Despite police reports and pediatrician citations, the recent Momo Suicide Challenge was determined by media to be fake. Hoax or not, the resurfacing Momo Suicide Challenge presents parents, teachers and governments with a hard-to-ignore fact: the internet is not a safe place for children, and we’ve known for years that the internet is not making our children happy. In 2018 the Blue Cross Blue Shield Association released findings showing a 64% rise in depression in teens between 2013 and 2016, with the Centers for Disease Control and Prevention reporting in 2016 a concurrent 30% rise in suicide. Jean Twenge’s 2017 research found that teens who spend five or more hours per day on their devices are 71 percent more likely to have one risk factor for suicide, regardless of the content consumed. It is becoming increasingly hard to ignore the real threats and harm posed to children and teens who spend long periods of unsupervised time in the virtual world. This failure to protect children and teens online originates with an absolute disregard for child safety by the internet giants, including WhatsApp, Facebook and YouTube. These platforms consistently use faulty and ineffective algorithms to screen content, often relying instead on parents to report inappropriate content, and then only after their children have been exposed. What follows is a historical account of seemingly forgotten events of equal or greater importance than the Momo Suicide Challenge, indicating that we have known for some time that the internet is an unsafe and unhappy ‘virtual hole’ into which many children and youth are unsuspectingly falling. The rise in these scary events indicates the urgent need for parents, teachers and governments to get involved, hold social media and video game designers responsible, and demand immediate changes to the internet to improve safety for children and youth. Until such time, parents and teachers are wise to prohibit unsupervised internet use in home and school environments.
Our children have a right to be safe and happy.

The past few years have been marked by numerous disturbing events which raised a few eyebrows but, surprisingly, garnered only fleeting concern from parents, teachers and governments. Everyone is likely to remember the 2014 stabbing of a classmate by two 12-year-olds in Wisconsin, who reported that ‘Slender Man’ (a fictional internet character) told them they needed to kill their friend in order to protect their families. Following the recent Momo Suicide Challenge reports, Common Sense Media detailed that while there are many funny and harmless internet challenges posed to children, there are just as many harmful ones, including the Tide Pod Challenge, where children are encouraged to eat Tide Pods, and the Choking/Fainting/Pass-Out Challenge, where kids choke other kids, press hard on their chests, or hyperventilate, which has resulted in reported deaths. The Blue Whale Suicide Challenge during the summer of 2017 was instigated by a disturbed 21-year-old man who solicited teens through chat sites and enticed them to participate in a series of 50 tasks which ended ultimately with their suicide. While the total number of teens who died by suicide as a result of this challenge is uncertain, numerous personal stories brought to the media by grieving parents provide credible proof. Unbelievably, the Blue Whale Challenge still exists online, as evidenced by the Jan. 21, 2019 suicide of a 13-year-old girl in Turkey who shot and killed herself, with her parents citing her participation in the Blue Whale Challenge.

What many parents seem to miss is that the Facebook, WhatsApp, and YouTube platforms themselves often perpetuate harm. Their automated moderation systems fail to flag inappropriate content, and their skewed content-recommendation algorithms promote extremist beliefs. These platforms don’t protect kids against cyberbullying from peers, they milk kids under the age of 13 for money and engagement, and they promote truly gruesome content. YouTube continually fails to keep harmful content off its kids’ channel. Businesses are pressuring YouTube to clean up its complicit role in harming children by pulling their advertising, citing proof of “pedophiles lurking in comments sections”. The disturbing Peppa Pig videos and the videos with suicide instructions are regrettably very real. One suicide instruction video was reported to the media by Dr. Hess, a pediatrician, but is apparently unrelated to Momo. Parent and teacher Emily Cherkin brilliantly states in her ParentMap article, “It should not take a viral prank (real or fake) to get us worried about what kids are seeing and doing online”, and details the importance of parents and teachers openly discussing with children the “shady side” of the internet.

Parents and teachers do not trust the technology giants, who are making trillions of dollars by providing internet content, to act responsibly. Until government intervenes with regulations and legislation to force the Tech Giants to quit using persuasive addiction design and faulty screening practices, this situation will only get worse. The internet is an ugly place where we do not want to “drop off” our children for the day, or even for a minute. Let’s bring this errant Tech Train back to the station and give it some much-needed repairs. In the meantime, put down your phone and go outside and play with your kids. They will love you for it.

This article was written by Cris Rowan, BScBi, BScOT, a biologist and pediatric occupational therapist passionate about changing the ways in which children use technology. Cris’s website is www.zonein.ca, blog www.movingtolearn.ca, and book www.virtualchild.ca. Cris can be reached at info@zonein.ca.
